A study published in Nature Neuroscience demonstrates that a brain-computer interface can enhance selective hearing in noisy environments. For the first time, researchers have used real-time neural signals to automatically amplify a specific voice while suppressing competing sound, offering a potential solution for the 430 million people worldwide living with disabling hearing loss.

The Challenge of the “Cocktail Party Problem”

One of the most persistent hurdles in auditory neuroscience is the “cocktail party problem”: the difficulty of isolating a single speaker amid a crowd of overlapping voices and background noise.

While normal hearing relies on the brain’s natural ability to filter sound based on attention, current hearing aids largely fail to replicate this sophistication. Traditional devices typically amplify all incoming sound indiscriminately. This lack of selectivity often degrades speech clarity in complex environments, leading to user frustration, low adoption rates, and increased social isolation for those with hearing impairments.

“We have developed a system that acts as a neural extension of the user, leveraging the brain’s natural ability to filter through all the sounds in a complex environment to dynamically isolate the specific conversation they wish to hear,” explained Dr. Nima Mesgarani of Columbia University.

How the Technology Works

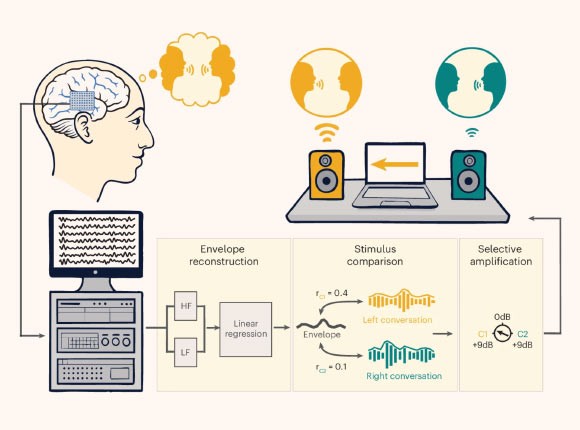

The study, led by Dr. Mesgarani and Dr. Vishal Choudhari, took advantage of a rare opportunity: working with epilepsy patients who were undergoing a procedure to pinpoint the source of their seizures. These volunteers already had intracranial electrodes implanted, allowing researchers to measure brain activity with high precision.

During the trials, participants listened to two overlapping conversations simultaneously. The system employed real-time machine-learning algorithms to analyze the subjects’ brainwaves and determine which conversation they were actively focusing on.

Once the system identified the target voice, it adjusted the audio output in real time:

* It amplified the voice the listener was attending to.

* It suppressed the competing voice and background noise.

The system adapted dynamically whether participants were instructed to focus on a specific speaker or chose freely, with the free-choice condition mimicking real-world conversational choices.
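To make the decode-then-remix idea concrete, here is a minimal Python sketch. It is an illustration only and not the study's algorithm, which this article does not describe in detail. It assumes a hypothetical upstream decoder has already produced a speech envelope from neural signals and that the two voices are available as separate signals. It then picks the voice whose loudness envelope best correlates with the decoded one, a comparison commonly used in auditory attention decoding research. The names `pick_attended` and `remix`, and the `boost` and `cut` gain values, are invented for this example.

```python
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat signal carries no evidence either way
    return cov / (var_x * var_y) ** 0.5


def pick_attended(decoded_envelope: Sequence[float],
                  speaker_envelopes: Sequence[Sequence[float]]) -> int:
    """Return the index of the speaker whose envelope best matches the
    envelope decoded from neural activity (assumed to be given)."""
    scores = [pearson(decoded_envelope, env) for env in speaker_envelopes]
    return max(range(len(scores)), key=scores.__getitem__)


def remix(speakers: Sequence[Sequence[float]], attended: int,
          boost: float = 1.0, cut: float = 0.25) -> List[float]:
    """Mix the speaker signals, keeping the attended one at `boost`
    gain and attenuating every other one to `cut` gain.
    Both gain values are illustrative assumptions."""
    mixed = [0.0] * len(speakers[0])
    for idx, signal in enumerate(speakers):
        gain = boost if idx == attended else cut
        for i, sample in enumerate(signal):
            mixed[i] += gain * sample
    return mixed


# Toy data: the decoded envelope rises and falls with speaker 0.
decoded = [0.1, 0.9, 0.2, 0.8]
envelopes = [[0.0, 1.0, 0.0, 1.0], [1.0, 0.0, 1.0, 0.0]]
target = pick_attended(decoded, envelopes)
output = remix(envelopes, target)
```

In a real-time setting, a loop like this would run repeatedly over short windows of audio and neural data so that the mix follows the listener as their attention shifts. Choosing the window length involves a trade-off: longer windows give more reliable decisions but respond more slowly.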

From Theory to Practical Application

The primary breakthrough lies in the system’s speed and stability. For brain-controlled hearing aids to be viable, they must process neural data fast enough that the adjustment feels natural to the user.

Dr. Vishal Choudhari emphasized the significance of this shift: “The central unanswered question was whether brain-controlled hearing technology could move beyond incremental advances, towards a prototype that could help someone hear better in real time.”

The results were striking. One participant was so surprised by the seamless integration of the technology that she accused researchers of manually adjusting the volumes behind her back. Others described the experience as “science fiction,” noting the profound potential for friends and family members struggling with hearing loss.

A New Era for Hearing Technology

This research marks a critical transition from theoretical models toward practical, real-world applications. By moving beyond simple amplification to selective enhancement based on neural intent, this technology aims to restore the sophisticated filtering capabilities of the human brain.

As the field advances, these findings suggest a future where hearing assistance is not just about volume, but about clarity and connection, potentially reducing the social barriers faced by millions of people with hearing impairments.